The Bug Apple Didn’t Want to Talk About

Here is the mechanism in plain English. Signal sends a push notification when a new message arrives. iOS renders that notification, which means iOS has to briefly hold the message content somewhere to display it. Even after the user deleted the message inside Signal, the notification payload lingered in a system cache. That cache was included in iCloud backups and local device backups.
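The mechanism can be sketched as a toy model. Everything here is an assumption made for illustration: the class, its fields, and its methods are hypothetical and do not reflect iOS internals or Signal's code. The point is only that deleting in the app and clearing the OS cache are two separate operations, and a backup captures both stores.

```python
from dataclasses import dataclass, field


@dataclass
class ToyDevice:
    """Toy model only: names and structure are illustrative, not iOS internals."""

    app_messages: dict = field(default_factory=dict)
    notification_cache: dict = field(default_factory=dict)

    def receive(self, msg_id: str, text: str) -> None:
        # The app stores the decrypted message...
        self.app_messages[msg_id] = text
        # ...and the OS keeps its own copy so it can render the notification.
        self.notification_cache[msg_id] = text

    def delete_in_app(self, msg_id: str) -> None:
        # Deleting inside the app only touches the app's own store.
        self.app_messages.pop(msg_id, None)

    def backup(self) -> dict:
        # A backup snapshots both stores, including the OS-level cache.
        return {
            "app_messages": dict(self.app_messages),
            "notification_cache": dict(self.notification_cache),
        }


device = ToyDevice()
device.receive("m1", "meet at 9")
device.delete_in_app("m1")
snapshot = device.backup()

print(snapshot["app_messages"])        # {}
print(snapshot["notification_cache"])  # {'m1': 'meet at 9'}
```

In this model the user did everything right inside the app, and the deleted text still travels with the backup.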

Law enforcement did not break Signal’s encryption. They did not compromise Signal’s servers. They subpoenaed Apple for the backup. The backup contained the cache. The cache contained the content of messages the user had long since deleted. Ars Technica reported the patch on April 23, 2026 — Apple stopped weirdly storing data that let investigators access Signal chats. The fix was real. The press release was not.

Signal’s own threat model documentation is explicit about the boundary: Signal cannot protect against compromise of the operating system or the device itself. Most users never read that caveat. Most users assume “end-to-end encrypted” means “nobody can read this.” It means nobody can read it in transit. What happens on the device — caches, backups, screenshots, notification previews — is outside Signal’s perimeter.

This is the pattern this entire article will return to: not broken tools, but leaks at the joints. Signal did its job. iOS did its job. The leak happened in the handshake between them — in the seam that neither product’s threat model fully owned.

Every “Secure” Tool Has a Blind Spot — and Attackers Live in Them

Every serious privacy tool publishes a threat model. The threat model describes what the tool protects against — and, if you read carefully, what it does not. The list of “does nots” is where the real risk lives.

Tor does not protect against browser fingerprinting. A user running Tor with a standard browser and JavaScript enabled can be uniquely identified by canvas rendering, font metrics, and screen resolution, none of which change when the traffic leaves through a different exit node. VPNs do not protect against WebRTC leaks: WebRTC is a browser API that sets up direct peer-to-peer connections, and the STUN requests it sends to discover its own address can travel outside the VPN tunnel and expose the real IP. Signal does not protect against OS-level caches, as demonstrated above. End-to-end encryption does not protect the endpoint: if the device is compromised, the plaintext is readable before it is encrypted and after it is decrypted.
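A rough sketch of why fingerprinting survives an IP change: if a site hashes the attributes a browser exposes, the hash stays the same no matter which network path the request took. The attribute names and values below are hypothetical, and real fingerprinting scripts collect far more signals than this.

```python
import hashlib
import json


def fingerprint(attrs: dict) -> str:
    """Hash browser-visible attributes into one stable identifier."""
    # Canonical JSON so that key order does not change the hash.
    canonical = json.dumps(attrs, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


# Hypothetical attribute values, for illustration only.
browser = {
    "canvas_hash": "9f2c1e",
    "fonts": ["Arial", "Fira Code", "Noto Sans"],
    "screen": [2560, 1440],
    "timezone": "Europe/Berlin",
}

# Same browser, two different network paths (documentation-range IPs).
visit_direct = {"ip": "198.51.100.20", "fp": fingerprint(browser)}
visit_via_exit_node = {"ip": "203.0.113.7", "fp": fingerprint(browser)}

same_browser = visit_direct["fp"] == visit_via_exit_node["fp"]
print(same_browser)  # True: the IP changed, the fingerprint did not
```

This is the reason purpose-built browsers try to make every user report the same attribute values rather than hiding them one by one.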

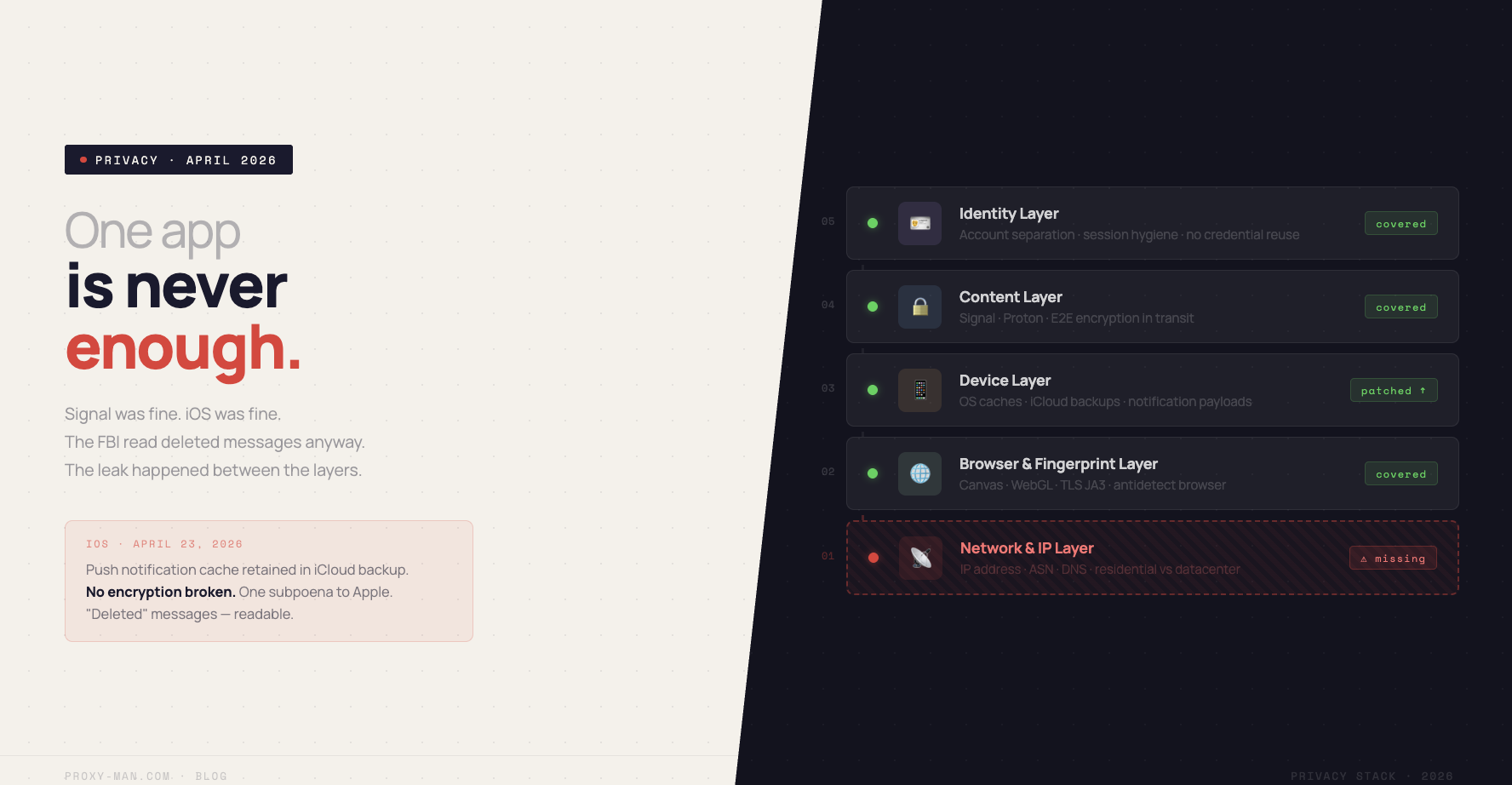

Think of privacy as a building with five floors:

- Content layer — what you say (E2E encryption: Signal, Proton Mail)

- App layer — how you browse (browser fingerprint, cookies, antidetect)

- Device layer — what your OS stores (backups, caches, notification previews)

- Network layer — where you appear to be (IP address, DNS, ASN classification)

- Identity layer — who you are across sessions (account linkage, behavioral fingerprint)

Leaks happen at the seams between floors, not inside the well-engineered rooms. Bruce Schneier said it in 2000 and it has not aged: security is a process, not a product. Expecting a single app to be a silver bullet is a category error. The question is never “is this app secure”; it is “which layer does this app cover, and what am I leaving naked.”
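The “which layer does this cover” question can be asked mechanically. The sketch below uses the five floors listed above; the tool-to-floor assignments are a deliberate simplification chosen for illustration, not a rating of any product.

```python
LAYERS = ["content", "app", "device", "network", "identity"]

# Which floor each tool covers. Coarse, illustrative assignments only:
# a VPN, for example, covers the network floor only partially.
COVERAGE = {
    "Signal": {"content"},
    "Proton Mail": {"content"},
    "antidetect browser": {"app"},
    "VPN": {"network"},
}


def uncovered(stack: list) -> list:
    """Return the floors that no tool in the stack claims to cover."""
    covered = set()
    for tool in stack:
        covered |= COVERAGE.get(tool, set())
    return [layer for layer in LAYERS if layer not in covered]


print(uncovered(["Signal"]))
# ['app', 'device', 'network', 'identity']
print(uncovered(["Signal", "antidetect browser", "VPN"]))
# ['device', 'identity']
```

Even a three-tool stack leaves the device and identity floors open in this model, which is the Signal and iOS case in miniature.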

In April 2026, Citizen Lab identified two surveillance vendors abusing access to telecom signaling networks — SS7 and Diameter — to track phone locations worldwide without any user interaction or malware. No app vulnerability. No phishing. The attack surface was the carrier infrastructure that every phone silently participates in.

The Most Leaky Layer Isn’t the One You Think — It’s the Network

Most privacy advice focuses on the content layer: use Signal, use Proton, use a password manager. Some reaches the app layer: harden your browser, block trackers. Very little gets to the network layer, which is arguably the most dangerous one in 2026 because it is the one platforms, advertisers, and government contractors rely on most heavily to link identities.

Three recent receipts that illustrate the scale of the problem:

First: in April 2026, the UK’s NCSC and international partners warned that Chinese state-aligned actors are increasingly using botnets of compromised consumer devices — home routers, IoT cameras — as proxy infrastructure to obscure attack origin. The implication runs both ways: if attackers can make malicious traffic look like residential broadband, the inverse is equally possible — legitimate users can be mistaken for infrastructure nodes, and residential IP reputation is actively being degraded by state actors piggybacking on it.

Second: the EFF’s Effector newsletter documented in April 2026 a case where a user’s data was handed to ICE without a judicial warrant and without notifying the user. The disclosure path ran through IP logs and account metadata held by a third-party platform — data that is retained as standard operating procedure by most consumer services, and that maps IP addresses to account identities with high precision.

Third: your IP address is the connective tissue that ties every “anonymous” account back to you. Meta’s and Google’s account-clustering systems use IP address as one of the strongest graph edges when linking separate accounts — confirmed repeatedly in Meta’s Coordinated Inauthentic Behavior transparency reports. Log in to a throwaway account from your home IP and you have already linked it to every other account you have ever opened from that address.
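To make the “graph edge” idea concrete, here is a minimal sketch that clusters accounts by shared login IP using union-find. It is not how any platform's system is known to work: real systems weigh many signals and treat a shared IP as evidence rather than proof. The function name and sample data are hypothetical.

```python
from collections import defaultdict


def cluster_accounts(logins: list) -> list:
    """Group accounts that ever shared an IP (union-find over login events)."""
    parent = {}

    def find(x: str) -> str:
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        parent[find(a)] = find(b)

    first_seen_on_ip = {}
    for account, ip in logins:
        find(account)  # register the account even if it never shares an IP
        if ip in first_seen_on_ip:
            union(account, first_seen_on_ip[ip])
        else:
            first_seen_on_ip[ip] = account

    groups = defaultdict(set)
    for account in parent:
        groups[find(account)].add(account)
    return sorted((sorted(g) for g in groups.values()))


# Documentation-range IPs; account names are made up.
logins = [
    ("personal", "198.51.100.20"),
    ("work", "192.0.2.44"),
    ("throwaway", "203.0.113.7"),
    ("throwaway", "198.51.100.20"),  # one login from the home IP
]
clusters = cluster_accounts(logins)
print(clusters)  # [['personal', 'throwaway'], ['work']]
```

A single login from the home address is enough to merge the throwaway into the personal cluster, and nothing done afterwards removes that edge.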

Most privacy guides skip the network layer because it is boring plumbing. It is also the layer that platforms and adversaries rely on first.

The Expat Who Thought a VPN Was Enough

Here is a Tuesday night, not a hacker thriller.

An engineer living in Berlin opens a new e-commerce site he has been meaning to check out. The homepage loads in German, flags his location, and offers delivery options for his neighborhood. He is running a free Chrome VPN extension. He assumed he was invisible.

He googles “why does the site still see my location with a VPN,” lands on a Hacker News thread, and the top comment is one word: WebRTC. He runs an online IP leak test. His real home IP is right there in the results, leaking cleanly around the VPN tunnel through his browser’s WebRTC implementation. The VPN extension encrypted his web traffic. It did not stop his browser from sending STUN requests directly over his normal connection, outside the tunnel. This failure mode has been publicly documented since 2015 and remains endemic in free and “no-config” VPN browser extensions.
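What a leak test checks can be sketched offline. WebRTC describes each address it discovers as an ICE candidate line; a test page collects those lines and looks for a public address that is not the VPN's exit. The sketch below parses candidate lines in the standard SDP layout. The sample lines, the function names, and the exit IP are assumptions for illustration, and the addresses come from documentation ranges.

```python
import ipaddress
from dataclasses import dataclass

# RFC 1918 private ranges: addresses here identify a LAN, not a subscriber.
RFC1918 = [
    ipaddress.ip_network(n)
    for n in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")
]


@dataclass(frozen=True)
class IceCandidate:
    address: str
    port: int
    kind: str  # "host", "srflx" (learned via STUN), "relay", ...


def parse_candidate(line: str) -> IceCandidate:
    # candidate:<foundation> <component> <transport> <priority>
    #           <address> <port> typ <type> [extensions...]
    fields = line.split()
    if len(fields) < 8 or fields[6] != "typ":
        raise ValueError(f"not an ICE candidate line: {line!r}")
    return IceCandidate(address=fields[4], port=int(fields[5]), kind=fields[7])


def leaked_public_ips(candidate_lines: list, vpn_exit_ip: str) -> list:
    """Return public IPs seen in candidates that are not the VPN exit."""
    leaked = set()
    for line in candidate_lines:
        cand = parse_candidate(line)
        try:
            ip = ipaddress.ip_address(cand.address)
        except ValueError:
            continue  # mDNS hostname such as "f3a1.local": no IP revealed
        if ip.is_loopback or ip.is_link_local:
            continue
        if any(ip in net for net in RFC1918):
            continue
        if str(ip) != vpn_exit_ip:
            leaked.add(str(ip))
    return sorted(leaked)


candidates = [
    "candidate:1 1 udp 2122260223 192.168.1.5 54321 typ host",
    "candidate:2 1 udp 1686052607 203.0.113.7 54321 typ srflx raddr 192.168.1.5 rport 54321",
    "candidate:3 1 udp 1686052607 198.51.100.20 40000 typ srflx raddr 10.8.0.2 rport 40000",
    "candidate:4 1 udp 2122260223 f3a1.local 54321 typ host",
]
leaks = leaked_public_ips(candidates, vpn_exit_ip="198.51.100.20")
print(leaks)  # ['203.0.113.7']
```

The second candidate is the leak: a server-reflexive address learned over STUN that does not match the VPN exit, which is exactly what the engineer saw in his test results.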

The Signal/FBI story and this story are the same story at different scales. In both cases: a trusted tool working as designed, a seam the user did not know existed, an identity leaking through the gap. The difference is scale of consequence — but the structural lesson is identical. Layered defense is not paranoia. It is the only model that works.

The Layered Privacy Stack, Built Like a Pro Would Build It

Here are the five layers, what each one solves, and — critically — what each one does not solve. The anti-pattern is stacking products without understanding overlap or gaps.

- Layer 1: Content (Signal, Proton Mail, Proton Drive)

  - Protects message content in transit and at rest on the provider’s servers. Does not protect metadata: who you messaged, when, how often. Does not protect against OS-level caches or device backups, as demonstrated above. Use it. Know its perimeter.

- Layer 2: Identity and fingerprint (hardened Firefox, antidetect browser, separate profiles)

  - Masks or randomizes the signals that identify a browser: Canvas fingerprint, WebGL hash, TLS JA3 signature, installed fonts, screen resolution. Does not protect your IP: two profiles with different fingerprints but the same IP are trivially linkable. Antidetect is a real industry for a reason; know what it covers.

- Layer 3: DNS (DNS-over-HTTPS, DNS-over-TLS, NextDNS, Cloudflare 1.1.1.1)

  - Prevents your ISP from reading your DNS queries in plaintext. Does not hide your IP from the destination server. Does not hide the server name sent in the TLS SNI field. A necessary hygiene step, not a privacy solution on its own.

- Layer 4: Network and IP

  - This is the layer most privacy guides either skip or hand off to a VPN and move on. A VPN is one shared endpoint with a data-center ASN, which Cloudflare Bot Management, PerimeterX, and most major anti-fraud systems can flag automatically as non-residential traffic. Residential proxies put you behind a real consumer ISP address (Comcast, AT&T, Deutsche Telekom), which IP-intelligence databases such as MaxMind, and the platforms that rely on them, classify as a home connection. Mobile proxies go further: under carrier-grade NAT (CGNAT), your IP is shared by hundreds of real carrier subscribers simultaneously, so aggressive banning cannot be done without collateral damage to legitimate users. This is the layer that determines which version of the web you see, whether your “separate” accounts are linked, and whether a subpoena to your ISP returns anything useful.

- Layer 5: Operational separation (account hygiene, browser isolation, session discipline)

  - Separate accounts for separate contexts. Separate browsers or containers for separate identities. Never reuse credentials across contexts. This layer is free and requires no software; it is entirely behavioral. It is also the layer that fails most often because it is inconvenient, and because one slip (logging into a personal account from a work context) undoes every technical measure above it.
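For Layer 3, it helps to see what DNS-over-HTTPS actually changes. The sketch below builds a standard DNS query and encodes it into an RFC 8484 GET URL without sending anything. The function names are hypothetical, and the resolver URL is used only as an example endpoint.

```python
import base64
import struct


def build_dns_query(hostname: str, qtype: int = 1) -> bytes:
    """Encode a minimal DNS query: one question, recursion desired."""
    # Header: ID=0 (RFC 8484 suggests 0 so responses cache well),
    # flags=0x0100 (RD), QDCOUNT=1, other counts 0.
    header = struct.pack("!HHHHHH", 0, 0x0100, 1, 0, 0, 0)
    # QNAME: each label prefixed by its length, terminated by a zero byte.
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in hostname.rstrip(".").split(".")
    ) + b"\x00"
    question = qname + struct.pack("!HH", qtype, 1)  # QTYPE (1 = A), QCLASS IN
    return header + question


def doh_get_url(resolver: str, hostname: str) -> str:
    """Build the GET form of a DoH request: base64url, padding stripped."""
    wire = build_dns_query(hostname)
    encoded = base64.urlsafe_b64encode(wire).rstrip(b"=").decode("ascii")
    return f"{resolver}?dns={encoded}"


url = doh_get_url("https://cloudflare-dns.com/dns-query", "www.example.com")
print(url)
# https://cloudflare-dns.com/dns-query?dns=AAABAAABAAAAAAAAA3d3dwdleGFtcGxlA2NvbQAAAQAB
```

The query is the same DNS message as before, only wrapped in HTTPS, so the ISP no longer sees it in plaintext. The resolver still sees the hostname and your IP, and the site you then connect to still sees your IP, which is why this layer is hygiene rather than a solution.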

The network layer — Layer 4 — is the one most privacy guides leave as a footnote. It is also the one that platforms, advertisers, and fraud-detection systems query first, because it is the fastest and cheapest signal they have.

Why This Matters Beyond the Tinfoil Crowd

The audience for this article is not only activists and dissidents — though the stakes are highest for them and the same tools serve them.

It is also the QA engineer who needs to validate checkout flows and pricing from twelve countries without burning the company’s corporate IP range and triggering geo-detection. The journalist researching a data leak who does not want her ISP’s logs handed over in response to a broad subpoena. The reseller who wants to purchase limited-release products without being profiled and blocked by a retailer’s purchase-velocity system. The developer running an AI agent that needs to make ten thousand API calls and gets rate-limited on the eleventh because all requests share the same datacenter IP.

The common denominator across all of them: each is one bug, one subpoena, one careless default setting away from being trivially identifiable. The Signal/Apple case is simply the most prestigious version of a universal problem. It happened to land in the news cycle because Signal is the app that privacy-conscious people trust most — which makes the lesson maximally legible.

Privacy in 2026 is not about hiding. It is about consent over which system sees which version of you. The content layer handles what you say. The network layer handles where you appear to be. Both matter. Only one of them gets covered in the average privacy guide.